The many power management, thermal, and ACPI updates have been merged for the Linux 7.0 kernel. As usual, there are many changes, ranging from fixes to new hardware support and more expansive thermal control capabilities under Linux…

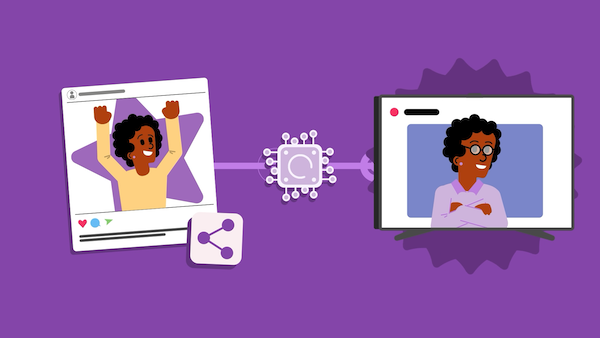

Helping young people stay safe online in the age of AI

The online world that young people navigate today is different from the one we encountered just a few years ago: the search engines, social media platforms and digital tools they use to find information, interact with friends and complete schoolwork are now deeply embedded with AI technologies.

While the core aims of online safety education remain the same, the scope must now expand to include AI literacy: the ability to use, question and navigate AI tools so young people can make responsible choices online.

This is a shared challenge for anyone who supports young people as they navigate the online world: parents and carers, youth leaders and volunteers, and educators across all subjects. Many young people use these AI tools independently, often without guidance, so having open and useful conversations about trust, risk and responsibility matters just as much in the classroom as it does at dinner tables and Code Clubs.

Why AI literacy is essential for staying safe online

At the Raspberry Pi Foundation, we have developed various AI literacy resources for educators, club leaders and parents to address this challenge in age-appropriate and practical ways. Our ‘AI safety’ resources, part of our Experience AI programme, are a free, comprehensive set of teaching activities to help you support young people aged 11–14 in navigating key safety issues linked to AI, including privacy, misinformation, trust and responsibility. Delivered through videos, unplugged activities and discussions, the activities are adaptable to a range of learning settings and reflect the real decisions young people are already making online.

For example, in the ‘Trusted Sources’ activity from the ‘Media literacy in the age of AI’ lesson, young people reflect on how they look for information for schoolwork, about the news and in their free time. They consider which sources are likely to allow the use of generative AI and how that affects their trustworthiness. Rather than labelling sources as ‘good’ or ‘bad’, learners explore questions around responsibility, credibility and oversight, and build practical skills for fact-checking and staying safe online.

Supporting responsible use of generative AI through Experience AI

Alongside the ‘AI safety’ resources, we have also developed a new ‘Large Language Models (LLMs)’ unit for learners aged 11–13 and 14–17, currently being tested in classrooms. The unit focuses on another important aspect of online safety: how young people interact responsibly with AI tools that generate content. While these tools can be helpful, learners who use them uncritically risk cognitive offloading, which could limit the development of their higher-order thinking skills. The confident, persuasive tone of LLMs can also make it harder for young people to judge accuracy, recognise bias or notice missing information in outputs.

To support the critical thinking skills that are essential for staying safe online, the new LLM unit includes research-informed lessons that explore how LLMs are created, why their outputs are not always accurate and how to evaluate AI-generated responses. The unit also encourages learners to reflect on when using an LLM is helpful to their learning, when it is not, and how they can remain in control of their own thinking, learning and skills development.

Starting the conversation this Safer Internet Day

Helping young people stay safe online in the age of AI doesn’t require having all the answers. Instead, it’s about creating the space to pause, question, and think critically about what they are encountering online. Through carefully designed, research-informed and pedagogically aligned AI literacy resources, we aim to help you start the conversations that empower young people to think critically, stay curious and remain in control of their learning and online lives.

This Safer Internet Day, we invite educators, parents and anyone who supports young people to explore our AI literacy resources and start the conversation. Visit the Experience AI website for more information.

The post Helping young people stay safe online in the age of AI appeared first on Raspberry Pi Foundation.

MythTV 36 Released With Web App Improvements & FFmpeg 8 Support

MythTV 36 is now available. This long-time open-source digital video recorder (DVR) software has been around for more than two decades and remains the leading choice for those wishing to watch and/or record live TV under Linux, especially as an HTPC…

LLVM 22.1-rc3 Released – LLVM To Provide Windows ARM Release Binaries Moving Forward

We are nearing the stable release of LLVM 22 in hopefully two weeks. Out today is the third release candidate of LLVM 22.1 for soliciting more testing of this open-source compiler stack…

Linux 7.0 Brings An EFI Framebuffer Quirk For Valve’s Steam Deck

The EFI subsystem updates have been merged for the in-development Linux 7.0 kernel. Worth mentioning here is a new quirk for helping Valve’s Steam Deck handheld…

Kdenlive 25.12.2 Delivers Monitor Fixes and Dragging Refactor

The Kdenlive 25.12.2 open-source video editor is now available with new stability fixes, better monitor performance, and workflow improvements.

GNU Binutils 2.46 Adds Support for AMD Zen6 CPUs, SFrame v3, and More

The GNU Binutils project announced today the release and general availability of GNU Binutils 2.46 as the latest stable version of this collection of binary tools for GNU/Linux operating systems.

pearOS 26.2 Released with Liquid Gel Design, Wayland Session, and More

pearOS 26.2 has been released today as a major update to this up-and-coming GNU/Linux distribution featuring the KDE Plasma desktop environment and based on the popular Arch Linux distribution.

GNU Linux-Libre 6.19 Kernel Is Now Available for Software Freedom Lovers

The GNU Linux-libre project announced today the release and general availability of the GNU Linux-libre 6.19 kernel for software freedom lovers and anyone seeking 100% freedom on their GNU/Linux computers.

Decman Is a Declarative Package and Configuration Manager for Arch Linux

Decman provides a declarative way to manage Arch Linux systems, ensuring installed packages and configuration match a defined state.
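The core idea behind declarative management can be sketched as a diff between a declared state and the current state. The helper below is hypothetical and is not Decman's code or configuration format; it only handles package names, whereas Decman also manages configuration. In practice, the installed set might come from `pacman -Qqe`, which lists explicitly installed packages.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    """Actions needed to move a system from its current state to the declared one."""
    to_install: frozenset
    to_remove: frozenset

    def is_empty(self) -> bool:
        # An empty plan means the system already matches the declaration.
        return not self.to_install and not self.to_remove


def reconcile(declared: set[str], installed: set[str]) -> Plan:
    """Diff the declared package set against the installed one (hypothetical helper)."""
    return Plan(
        to_install=frozenset(declared - installed),  # declared but missing
        to_remove=frozenset(installed - declared),   # present but no longer declared
    )
```

For example, `reconcile({"git", "neovim"}, {"git", "nano"})` plans to install `neovim` and remove `nano`. Once that plan has been applied, reconciling again yields an empty plan, which is what lets a declarative tool be re-run safely.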

More than 135,000 OpenClaw instances exposed to internet in latest vibe-coded disaster

By default, the bot listens on all network interfaces, and many users never change it. It’s a day with a name ending in Y, so you know what that means: Another OpenClaw cybersecurity disaster.…
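Listening "on all network interfaces" usually means binding to the wildcard address `0.0.0.0`, which accepts connections from any network the host is reachable on. A minimal sketch of the safer default follows, using only Python's standard `socket` module; it is a generic illustration and not OpenClaw's code or configuration, whose actual settings are not described here.

```python
import socket


def open_listener(host: str = "127.0.0.1", port: int = 0) -> socket.socket:
    """Create a TCP listening socket bound to `host`.

    "127.0.0.1" (loopback) accepts connections only from the local machine.
    "0.0.0.0" would accept them on every IPv4 interface, including public ones.
    Port 0 asks the OS to pick any free port.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind((host, port))
    sock.listen()
    return sock
```

Remote access can then be added deliberately, for example through an SSH tunnel or an authenticated reverse proxy, instead of being exposed by default.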

Redox OS Gets Cargo & The Rust Compiler Running On This Open-Source OS

The Rust-written Redox OS open-source operating system is now able to leverage Cargo and the Rust compiler “rustc” itself running within this platform. Plus they made a heck of a lot of other improvements over the course of the past month. Today they published a status update to outline all of the promising advancements made to this independent OS so far in 2026…

AMD openSIL + Coreboot Being Ported To A Modern AM5 Consumer Motherboard

While we are very eager for the AMD openSIL open-source CPU silicon initialization project to achieve production readiness with Zen 6 platforms for ultimately replacing AGESA, there is some experimental excitement on the way for open-source firmware enthusiasts… OpenSIL and Coreboot are being brought to an AM5 motherboard you can buy retail…

Windows 11 vs. Ubuntu Linux Performance For Intel Core Ultra X7 Panther Lake

Last week I began publishing the many exciting Panther Lake benchmarks under Linux, from the interesting CPU performance and efficiency to the much-anticipated Xe3-based Intel Arc B390 graphics. Up today is a look at how the out-of-the-box performance of the Intel Core Ultra X7 358H compares under Microsoft Windows 11 and the current Ubuntu Linux 26.04 development state.

[$] Development statistics for 6.19

Linus Torvalds released the 6.19 kernel on February 8, as expected. This development cycle brought 14,344 non-merge changesets into the mainline, making it the busiest release since 6.16 in July 2025. As usual, we have put together a set of statistics on where these changes come from, along with a quick look at how long new kernel developers stay around.
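A "non-merge changeset" count for a cycle can be approximated with `git rev-list --no-merges --count`, which counts commits reachable from the new tag but not the previous one while skipping merge commits. The sketch below assumes a local mainline clone with the `v6.18` and `v6.19` tags; it is not necessarily the exact tooling behind these statistics, so its result may differ slightly from the published figure.

```python
import subprocess


def rev_list_command(repo: str, since_tag: str, until_tag: str) -> list[str]:
    """Build a git command counting non-merge commits in since_tag..until_tag."""
    return [
        "git", "-C", repo, "rev-list",
        "--no-merges",               # skip merge commits
        "--count",                   # print only the number of commits
        f"{since_tag}..{until_tag}",  # in until_tag but not in since_tag
    ]


def count_non_merge_changesets(repo: str, since_tag: str, until_tag: str) -> int:
    """Run the command in a local clone and return the commit count."""
    result = subprocess.run(
        rev_list_command(repo, since_tag, until_tag),
        capture_output=True, text=True, check=True,
    )
    return int(result.stdout.strip())
```

Usage, against a clone at `./linux`: `count_non_merge_changesets("linux", "v6.18", "v6.19")`.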

New Rust Tool Traur Analyzes Arch AUR Packages for Hidden Risks

Traur is a new tool written in Rust that checks Arch AUR packages for hidden security risks before you install them.
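To make "hidden security risks" concrete, here is a minimal sketch of one kind of check an AUR scanner might perform: flagging suspicious patterns in a PKGBUILD before it is built. The patterns and the `scan_pkgbuild` helper are hypothetical illustrations and are not Traur's actual rules or API; the sketch is also written in Python rather than Rust, and simple pattern matching like this is easy to evade.

```python
import re

# Hypothetical heuristics for illustration only; not Traur's actual rule set.
RISKY_PATTERNS = {
    "download piped to shell": re.compile(r"\b(curl|wget)\b[^\n|]*\|\s*(ba)?sh\b"),
    "base64-decoded payload": re.compile(r"base64\s+(-d|--decode)\b"),
    "eval of dynamic content": re.compile(r"\beval\b"),
}


def scan_pkgbuild(text: str) -> list[tuple[int, str]]:
    """Return (line number, finding) pairs for lines matching a risky pattern."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        if line.strip().startswith("#"):
            continue  # ignore shell comments
        for label, pattern in RISKY_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, label))
    return findings
```

A line such as `curl -fsSL https://example.com/install.sh | bash` inside `build()` would be reported as "download piped to shell", while an ordinary `source=(...)` line would not.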

Blender 5.1 Lands Raycast Nodes, Blender Adjusting Release Cycle Moving Forward

Two interesting bits of Blender news this week for those fond of this leading open-source 3D modeling software…

Offpunk 3.0 released

Version 3.0 of the Offpunk offline-first, command-line web, Gemini, and Gopher browser has been released. Notable changes in this release include integration of the unmerdify library to “remove cruft” from web sites, the xkcdpunk standalone tool for viewing xkcd comics in the terminal, and a cookies command to enable browsing web sites (such as LWN.net) while being logged in.

Something wonderful happened on the road leading to 3.0: Offpunk became a true cooperative effort. Offpunk 3.0 is probably the first release that contains code I didn’t review line-by-line. Unmerdify (by Vincent Jousse), all the translation infrastructure (by the always-present JMCS), and the community packaging effort are areas for which I barely touched the code.

So, before anything else, I want to thank all the people involved for sharing their energy and motivation. I’m very grateful for every contribution the project received. I’m also really happy to see “old names” replying from time to time on the mailing list. It makes me feel like there’s an emerging Offpunk community where everybody can contribute at their own pace.

There were a lot of changes between 2.8 and 3.0, which probably means some new bugs and some regressions. We count on you, yes, you!, to report them and make 3.1 a lot more stable. It’s as easy as typing “bugreport” in offpunk!

See the “Installing Offpunk” page to get started.

Debian’s tag2upload considered stable

Sean Whitton has announced that Debian’s tag2upload service is now out of beta and ready for use by Debian developers and maintainers.

During the beta we encountered only a few significant bugs. Now that we’ve fixed those, our rate of successful uploads is hovering around 95%. Failures are almost always due to packaging inconsistencies that older workflows don’t detect, and therefore only need fixing once per package.

We don’t think you need explicit approval from your co-maintainers anymore. Your upload workflows can be different to your teammates. They can be using dput, dgit or tag2upload.

LWN covered tag2upload in July 2024.